In this article I will show you how to create SharePoint 2010/2013 Search Content Sources with a handy PowerShell script, and why you should care.

This is my 100th blog post and therefore it has to be something with SharePoint Search - something good. I had the idea for the script on my mind for quite a while now, but there was no project and no time to create it - until now.

The Problem

During my consulting work I see quite a lot of different SharePoint environments, and 90% of the Search Service Applications look like this before I start working there:

What is the problem, you may ask? I see quite a few. Most intranets I see are rather big and have different sections, departments or regions within their portal, each with different requirements of course. Some aggregate their content via Search (aka search-driven applications) and some don’t; some upload documents, some only use SharePoint as an archive. SharePoint can be used as flexibly as the requirements differ, so you should adjust your Search Content Sources accordingly, because everything you configure there will be in the search index and everything else won’t.

Everything you configure in the SharePoint Content Sources will be in the search index - everything else won’t!

So what is the problem with one content source?

You are not flexible: no different crawl schedules, no priorities, just one setup to cover everything. How about different settings for DEV / QA systems? How about different crawl schedules for people search? LOB / BCS or other external systems? Or do people complain that some results appear too late in the search, or that they are not there at all? Read on!

The Solution

You can create content sources with PowerShell. That’s a good thing, because it enables us to automate the setup. Apart from creating it by hand in Central Administration, the easiest way to create a content source is this one-liner:

New-SPEnterpriseSearchCrawlContentSource -Type SharePoint -name "Content Source 1" -StartAddresses "http://sharepoint" -SharePointCrawlBehavior CrawlVirtualServers

You will be asked for the name of the Search Service Application, and then the cmdlet creates the content source for you. Good, simple, and it works, but the new content source has no crawl schedule. Now imagine doing that for 16 different content sources with different crawl schedules and whatnot, and it should be maintainable and readable, too. So we need a more sophisticated script with reproducible results. In other words, we need an XML config file and a PowerShell script with the logic.
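To make the missing crawl schedule more concrete, here is a rough sketch of how one could be added with `Set-SPEnterpriseSearchCrawlContentSource`. The helper name `Set-ExampleCrawlSchedule` and its default values are assumptions for illustration only and are not taken from the released script; the schedule values simply mirror the sample configuration used later in this post. It assumes the SharePoint snap-in is already loaded.

```powershell
# Hypothetical helper for illustration; not taken from the released script.
# Requires the SharePoint snap-in (Microsoft.SharePoint.PowerShell) to be loaded.
function Set-ExampleCrawlSchedule {
    param(
        # Object returned by New-/Get-SPEnterpriseSearchCrawlContentSource
        [Parameter(Mandatory=$true)] $ContentSource,
        # Assumed defaults, matching the sample configuration in this post
        [string] $FullCrawlDay = "Saturday",
        [string] $FullCrawlStart = "06:00"
    )

    # Full crawl: once a week on the given day and time.
    $full = @{
        ScheduleType                  = "Full"
        WeeklyCrawlSchedule           = $true
        CrawlScheduleRunEveryInterval = 1
        CrawlScheduleDaysOfWeek       = $FullCrawlDay
        CrawlScheduleStartDateTime    = $FullCrawlStart
    }
    $ContentSource | Set-SPEnterpriseSearchCrawlContentSource @full

    # Incremental crawl: every day, repeated every 30 minutes
    # for a duration of 1440 minutes (24 hours).
    $incremental = @{
        ScheduleType                  = "Incremental"
        DailyCrawlSchedule            = $true
        CrawlScheduleRunEveryInterval = 1
        CrawlScheduleRepeatInterval   = 30
        CrawlScheduleRepeatDuration   = 1440
    }
    $ContentSource | Set-SPEnterpriseSearchCrawlContentSource @incremental
}
```

You would call it with the object returned by the one-liner, e.g. `Set-ExampleCrawlSchedule -ContentSource $cs`. Passing `-SearchApplication` to the `New-` cmdlet should also avoid the interactive prompt for the Search Service Application.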

My script consists of two files: one is the actual PowerShell script with all the logic, and the second is an XML config file with all the parameters. Let’s have a look inside the XML file.

XML Config File

In the 3rd line you have to specify the Search Service Application; in my case this is “Search Service Application”.

In lines 13, 46 and 81 I configure three different content sources. If you want to create more, you only have to copy one block and, for ease of use, maybe the comments surrounding the block starting with

Every

In every

Then every

The script

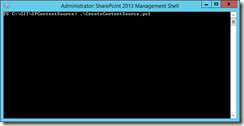

You don’t have to read the script or customize it. Everything is configured in the XML config; the script just reads the XML file, which must be in the same directory. The script takes no parameters but must be run in an elevated PowerShell on a SharePoint server.
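If you want to verify these prerequisites before starting, a few pre-flight checks could look like the sketch below. Both function names are hypothetical and not part of the released script, and I am not claiming the script needs them; they only check for an elevated session and load the SharePoint snap-in in case you start from a plain PowerShell console.

```powershell
# Illustrative pre-flight checks; not taken from the released script.

# Returns $true when the current session runs with administrator rights.
function Test-IsElevated {
    $identity  = [Security.Principal.WindowsIdentity]::GetCurrent()
    $principal = New-Object Security.Principal.WindowsPrincipal($identity)
    return $principal.IsInRole([Security.Principal.WindowsBuiltInRole]::Administrator)
}

# Loads the SharePoint cmdlets unless they are already available in the session.
function Import-SharePointSnapin {
    $name = "Microsoft.SharePoint.PowerShell"
    if (-not (Get-PSSnapin -Name $name -ErrorAction SilentlyContinue)) {
        Add-PSSnapin -Name $name -ErrorAction Stop
    }
}
```

A possible use is to stop early with `if (-not (Test-IsElevated)) { throw "Please start PowerShell as administrator." }`, then call `Import-SharePointSnapin` and run the script as shown next.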

.\CreateContentSource.ps1

Running the script with the provided xml would give you the following output:

    Search Service Application: Search Service Application - exists

    SharePoint 2010 (QA)
    Content Source: SharePoint 2010 (QA) already exist.
    Url: http://sharepoint2013
    Schedules:
    ScheduleType: Full
    Schedule: Weekly
    Days: Saturday
    Startdate: 06:00
    ScheduleType: Incremental
    Schedule: Daily
    Run every: 1 day(s)
    Repeat Interval: 30 minutes
    Repeat Interval: 1440 minutes

    SharePoint 2007 (productive)
    Content Source: SharePoint 2007 (productive) already exist.
    Url: http://sharepoint2007/sites/test1
    Url: http://sharepoint2007/sites/test2
    Url: http://sharepoint2007/sites/test3
    Schedules:
    ScheduleType: Full
    Schedule: Weekly
    Days: Saturday
    Startdate: 10:00
    ScheduleType: Incremental
    Schedule: Daily
    Run every: 1 day(s)
    Repeat Interval: 30 minutes
    Repeat Interval: 1440 minutes

    Fileshare
    Url: \\filer\folder

    Name      Id Type CrawlState CrawlCompleted
    ----      -- ---- ---------- --------------
    Fileshare 19 File Idle

    Schedules:
    ScheduleType: Full
    Schedule: Weekly
    Days: Saturday
    Startdate: 10:00
    ScheduleType: Incremental
    Schedule: Daily
    Run every: 1 day(s)
    Repeat Interval: 30 minutes
    Repeat Interval: 1440 minutes

    done.

This will result in the following configuration in the central admin:

Important: This script does not remove or rename content sources (it simply can’t detect those changes)! If you want to rename an existing content source, you can either delete the content source in Central Administration or rename it both there and in the XML file.

Important: Please keep in mind that deleting a content source or changing the start addresses within a content source deletes items from your index! Once you remove URLs from a content source, the index is cleaned up automatically, and you have to recrawl the items if you still need them!
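Because removed start addresses are what trigger the index cleanup, it can be worth checking which URLs would disappear before you change a content source. The following is a minimal sketch of such a check. `Get-RemovedStartAddress` is a hypothetical helper that is not part of the released script, and it only does a simple string comparison (ignoring case and trailing slashes), which I assume is good enough for http URLs but not for file shares.

```powershell
# Hypothetical helper for illustration; not taken from the released script.
# Returns the current start addresses that are missing from the desired list.
function Get-RemovedStartAddress {
    param(
        [string[]] $Current = @(),   # addresses configured right now
        [string[]] $Desired = @()    # addresses you are about to configure
    )

    # Normalise an address: trim whitespace and trailing slashes, lower-case it.
    $normalize = { param($u) $u.Trim().TrimEnd('/').ToLowerInvariant() }

    $wanted = @($Desired | ForEach-Object { & $normalize $_ })
    return @($Current | Where-Object { $wanted -notcontains (& $normalize $_) })
}

# Example with the start addresses from the sample output above:
$current = "http://sharepoint2007/sites/test1/",
           "http://sharepoint2007/sites/test2",
           "http://sharepoint2007/sites/test3"
$desired = "http://sharepoint2007/sites/test1",
           "http://sharepoint2007/sites/test3"

Get-RemovedStartAddress -Current $current -Desired $desired
# -> http://sharepoint2007/sites/test2
```

Every address this returns would be removed from the content source, so its items would need a recrawl if you add the address back later.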

If you change parameters in the config file, e.g. the crawl schedule, and run the script again, the script will update the content source for you. So if you have another environment with the same configuration, just copy both files; if the environments differ, you have to adjust the config file accordingly.
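The create-or-update behaviour can be pictured roughly like the sketch below. This is not the code from the released script: `Set-ExampleContentSource` is a hypothetical name, it only handles content sources of type SharePoint, and it assumes the SharePoint snap-in is loaded.

```powershell
# Hypothetical create-or-update helper; the released script may work differently.
# Requires the SharePoint snap-in and only handles content sources of type SharePoint.
function Set-ExampleContentSource {
    param(
        [Parameter(Mandatory=$true)] $SearchApplication,
        [Parameter(Mandatory=$true)] [string] $Name,
        [Parameter(Mandatory=$true)] [string[]] $StartAddresses
    )

    # The cmdlets take the start addresses as one comma-separated string.
    $addresses = $StartAddresses -join ","

    # Look the content source up by name; $null means it does not exist yet.
    $existing = Get-SPEnterpriseSearchCrawlContentSource -SearchApplication $SearchApplication |
        Where-Object { $_.Name -eq $Name }

    if ($null -eq $existing) {
        $create = @{
            SearchApplication = $SearchApplication
            Type              = "SharePoint"
            Name              = $Name
            StartAddresses    = $addresses
        }
        New-SPEnterpriseSearchCrawlContentSource @create
    }
    else {
        # Caution: items under start addresses that are no longer listed
        # get removed from the index.
        $existing | Set-SPEnterpriseSearchCrawlContentSource -StartAddresses $addresses
    }
}
```

Running it twice with the same arguments should leave a single content source in place, which is the property that makes copying the files to another environment safe.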

How to get the script

I released the script under the MIT license in this GitHub repository:

https://github.com/MaxMelcher/SPContentSource

Feedback / next steps

Some parts of the script are in a very rough state - I will update the script very soon because I need it in a project. Some of the next steps are:

- Handle priorities

- Support continuous crawl (SP2013 only)

- Implement BCS and other types

- Add some more logging

- Add some error handling

- Add crawl rules

If you encounter any bug or problem, please drop me a line or open an issue in the GitHub repository. Contributions, pull requests or any other feedback are much appreciated.
